Biological and Machine Intelligence |

英文と和文では、語順がちがうので、日本語直訳で S|A|B|、は、V|C|D|。 となっている場合は、

|の後に、、や。がついて、|、や|。 となっているところから、折り返して

日本語的には、B A S は、D C V。 となります。

2016.9.17 更新2016.9.19

http://numenta.com/assets/pdf/biological-and-machine-intelligence/0.4/BaMI-Introduction.pdf

●The 21st century is a watershed in human evolution.

21世紀は、人類進化の分水界です。

We are solving| the mystery of how the brain works| and starting| to build machines| that work on the same principles as the

brain|.

私達は、解きます|脳がいかに働くかというミステリーを|。そして、開始します|機械の構築を|脳と全く同じ原理で働く|。

We see| this time| as the beginning of the era of machine intelligence,| which will enable an explosion of beneficial applications and scientific

advances|.

私達は、見なします|この時を|機械知能の時代の始まりとして|有益なアプリケーションと科学の発展の爆発を可能とする|。

●Most people intuitively see| the value in understanding how the human brain

works|.

殆どの人は、直観的に見ます|人間の脳がいかに働くかを理解することの価値を|。

It is easy| to see| how brain theory could lead to the cure and prevention of mental disease or how it could lead to better methods for

educating our children|.

容易です|見ることは|いかに脳科学が精神の病気の治療や予防に導くか、もしくは、いかに脳科学が私達の子どもの教育のよりよい方法に導くかを|。

These practical benefits justify| the substantial efforts underway to reverse engineer the

brain|.

これらの実際的な利点は、正当化します|脳をリバースエンジニアしようとする進行中の実質的な努力を|

However, the benefits go beyond| the near-term and the

practical|.

しかし、利点は、ずっと超えます|短期的なものや実用的なものを|。

The human brain defines| our

species|.

人類の脳は、定義します|私達の種を|。

In most aspects we are an unremarkable species, but our brain is unique.

多くの点において、私達は、特に注目すべきことのない種です。しかし、私達の脳は独特なのです。

The large size of our brain, and its unique design, is| the reason humans are the most successful species on our planet.

私達の脳の巨大な大きさと、独特のデザインは、です|人類が地球で最も成功した種である理由|。

Indeed, the human brain is| the only thing we know of in the universe| that can create and share

knowledge|.

実際、人類の脳は、です|この宇宙で私たちが知っている唯一のもの|知識を作り出し共有することのできる|。

Our brains are capable of| discovering the past, foreseeing the future, and unravelling the mysteries of the

present|.

私達の脳は、できます|過去を発見し、未来を予測し、現在のミステリーを解明することが|。

Therefore, if we want to understand who we are, if we want to expand our knowledge of the universe, and if we want to explore new

frontiers, we need| to have a clear understanding| of how we know, how we learn, and how to build intelligent

machines to help us acquire more knowledge|.

それ故、もし私達が自分は誰であるかを理解することを望み、宇宙の知識を拡大することを望み、新しいフロンティアを探求することを望むのであれば、私達は必要です|鮮明に理解することを|私達がいかにして知り、いかにして学び、より多くの知識を得るために、いかにして知能機械を作るかについて|。

The ultimate promise of brain theory and machine intelligence is

the acquisition and dissemination of new knowledge.

脳の理論と機械知能の究極の約束は、新しい知識の獲得と普及です。

Along the way there will be innumerous benefits to society.

この道に沿って、社会への数しれない利益があるでしょう。

The beneficial impact of machine intelligence in our daily lives will equal and ultimately exceed that of

programmable computers.

私達の日常生活における機械知能の有益な衝撃は、プログラム可能なコンピュータのそれ(有益な衝撃)に、匹敵し、究極に超越します。

●But exactly how will intelligent machines work and what will they do?

しかし、厳密に、知能機械達は、いかにして働き、何をするのでしょう。

If you suggest to a lay person that the way to build intelligent machines is to first understand how the human brain works and then build machines

that work on the same principles as the brain, they will typically say, “That makes sense”.

あなたが素人に示唆するとします、知能機械を造る方法は、まず、人間の脳がどう働くかを理解し、そして、脳と同じ原理で働く機械を造るのだと。すると彼らは典型的に言うでしょう。「なるほど」と。

However, if you suggest this same path to artificial intelligence ("AI") and machine learning scientists, many will disagree.

しかし、あなたが、同じことを、人工知能や機械学習の学者に示唆するとすると、彼らの多くは同意しないでしょう。

The most common rejoinder you hear is “airplanes don’t flap their wings”, suggesting| that it doesn’t matter how

brains work, or worse, that studying the brain will lead you down the wrong path, like building a plane that flaps

its wings|.

あなたが聞くもっとも普通の返答は、「飛行機は翼を羽ばたかせない」です。これは示唆します|いかに脳が働くかは関係ない、それどころか、脳を研究しても、間違った道にひきずりこまれるだけだ、翼を羽ばたかせる飛行機を作るみたいにと|。

●This analogy is both misleading and a misunderstanding of history.

このアナロジーは、人を惑わせると同時に、歴史の誤理解でもあります。

The Wright brothers and other successful pioneers of aviation understood the difference between the principles of flight and the need for propulsion.

ライト兄弟や、飛行のほかのパイオニアの成功者達も、飛行の原理と、推進力の必要性の間の違いは理解していました。

Bird wings and airplane wings work on the same aerodynamic principles, and those principles had to be

understood before the Wright brothers could build an airplane.

鳥の翼も飛行機の翼も、同じ空気力学的原理で働きます。そして、これらの原理は、ライト兄弟が飛行機を造ることができるより前に理解されていなければなりません。

Indeed, they studied| how birds glided| and tested| wing shapes| in wind tunnels| to learn the principles of

lift|.

実際、彼らは、研究しました|いかにして鳥は滑空するかを|。そして、試験しました|翼の形状を|風洞において|揚力の原理を学ぶために|。

Wing flapping is different; it is a means of propulsion, and the specific method used for propulsion is less important| when it comes to building flying

machines|.

翼を羽ばたくことは、別のことです。それは、推進力の方法です。そして、推進のために使われる個別の方法は、そんなに重要ではありません|飛行機械を造ることに関して|。

In an analogous fashion, we need to understand| the principles of intelligence| before we can build

intelligent machines|.

同様に、私達は、理解することが必要です|知能の原理を|知能機械を造ることができるより前に|。

Given| that the only examples we have of intelligent systems are

brains, and the principles

of intelligence are not obvious|, we must study brains| to learn from them.

与えられたとすると|知能システムについて私達が持っている唯一の例が脳であり、知能の原理が明瞭ではないと|、私達は、脳を研究しないといけません|それらを学ぶためには|。

However, like airplanes and birds, we don't need to do everything the brain does, nor do we need to implement the principles of intelligence in the

same way as the brain.

しかし、飛行機と鳥のように、私達は脳がするすべてのことをする必要はありませんし、脳と同じように知能の原理を実装する必要もありません。

We have a vast array of resources in software and silicon| to create intelligent machines

in novel and exciting ways|.

私達には、ソフトウェアやシリコンチップに広範囲で大量の資源があります|知能機械を新奇で刺激的に創造するために|。

The goal of building intelligent machines is not to replicate human behavior, nor to

build a brain, nor to create machines to do what humans do.

知能機械を造ることの目標は、人間の振る舞いを複製することではなく、脳を造ることでもなく、人間の行うことを行う機械を造ることでもありません。

The goal of building intelligent machines is to create| machines that work on the same principles as the brain

— machines that are able to learn, discover, and

adapt in ways that computers can’t and brains can|.

知能機械を造ることの目標は、造ることです|脳と同じ原理で働く機械、コンピュータにはできず、脳にはできる方法で学習し、発見し、適合することができる機械、を|。

●Consequently, the machine intelligence principles we describe in this book are derived from studying the brain.

結果的に、この本で記述する機械知能の原理は、脳を研究することにより導かれるものです。

We use neuroscience terms to describe most of the principles, and we describe how these principles are

implemented in the brain.

私達は、神経科学の用語を使って、殆どの原理を記述します、また、私達は、これらの原理が脳でどのように実行されるかを記述します。

The principles of intelligence can be understood by themselves, without referencing

the brain, but for the foreseeable future it is easiest to understand these principles in the context of the brain

because the brain continues to offer suggestions and constraints on the solutions to many open issues.

知能の原理は、脳を参照することなく、それ自身で、理解することができます。しかし、予見できる(しばらくの)間は、脳の文脈でこれらの原理を理解することが最も容易です。なぜなら、脳は、多くの公開の問題の解決策への示唆や制約を提供し続けるからです。

●This approach to machine intelligence is different than that taken by classic AI and artificial neural networks.

機械知能へのこのアプローチは、古典AIや人工ニューラルネットがとったアプローチとは、異なっています。

AI technologists attempt| to build intelligent machines| by encoding rules and knowledge| in software and

human-designed

data structures|.

AI技術者は、試みます|知能マシンを造ることを|ルールや知識をエンコードすることにより|ソフトウェアや人間がデザインしたデータ構造の|。

This AI approach has had many successes solving specific problems but has not

offered a generalized approach to machine intelligence and, for the most part, has not addressed the question

of how machines can learn.

このAIのアプローチは、個々の問題を解決することに沢山成功しましたが、機械知能への一般的アプローチを提供しませんでしたし、大部分において、いかに機械が学習できるかという問いには取り組みませんでした。

Artificial neural networks (ANNs) are learning systems built using networks of

simple processing elements.

人工ニューラルネット(ANN)は、です|学習システム|単純な処理要素のネットワークを使って構築された|。

In recent years ANNs, often called “deep learning networks”, have succeeded in

solving many classification problems.

近年、ANNは、しばしば、「深層学習ネットワーク」と呼ばれて、沢山の分類問題を解決することに成功しました。

However, despite the word “neural”, most ANNs are based on neuron

models and network architectures that are incompatible with real biological tissue.

しかし、「ニューラル」という言葉にもかかわらず、殆どのANNは、基づいています|ニューロンモデルとネットワーク構造に|実際の生物学的組織とは両立しない|。

More importantly, ANNs, by deviating from known brain principles, don't provide an obvious path to building truly intelligent machines.

もっと重要なことに、ANNは、既知の脳の原理から逸脱することにより、提供しません|真の知能機械を構築する明瞭な道を|。

●Classic AI and ANNs generally are designed to solve specific types of problems rather than proposing a general

theory of intelligence.

古典AIとANNは、一般的に、設計されています|特別なタイプの問題を解くように|知能の一般理論を提供するよりも|。

In contrast, we know that brains use common principles for vision, hearing, touch,

language, and behavior.

逆に、私達は知っています|脳は視覚や聴覚や触覚や言語や動作のために共通な原理を使うことを|。

This remarkable fact was first proposed in 1979 by Vernon Mountcastle.

この特筆すべき事実は、最初、1979年に、マウントハャッスルによって提案されました。

He said there is nothing visual about visual cortex and nothing auditory about auditory cortex.

彼は言いました|視覚の大脳皮質に何ら視覚的なものはなく、聴覚の大脳皮質になんら聴覚的なものはないと|。

Every region of the neocortex performs the same basic operations.

大脳新皮質のすべての領域は、同じ基本操作を実行します。

What makes the visual cortex visual is that it receives input from the eyes;

what makes the auditory cortex auditory is that it receives input from the ears.

視覚の大脳皮質を視覚的にするものは、それが眼からの入力を受け取るからであり、聴覚の大脳皮質を聴覚的にするものは、それが耳から入力を受け取るからです。

From decades of neuroscience research, we now know this remarkable conjecture is true.

何十年もの神経科学の研究から、私達は知っています|この特筆すべき憶測が真実であることを|。

Some of the consequences of this discovery are surprising.

この発見の結果のいくつかは、驚くべきものです。

For example, neuroanatomy tells us that every region of the neocortex has both sensory and motor

functions.

例えば、神経解剖学は、教えます|大脳皮質のすべての領域は、感覚機能と運動機能の両方を持っていることを|。

Therefore, vision, hearing, and touch are integrated sensory-motor senses;

それ故、視覚、聴覚、触覚は、統合された感覚-運動の感覚です。

we can’t build systems

that see and hear like humans do without incorporating movement of the eyes, body, and limbs.

私達には、造ることができません|人間のように見たり聞いたりするシステムを|眼と、体と、手足の動きを組み込むことなしには|。

●The discovery that the neocortex uses common algorithms for everything it does is both elegant and fortuitous.

大脳皮質は、それが行うすべての事に共通のアルゴリズムを使っているという発見は、エレガントであり、思いがけないものです。

It tells us that to understand how the neocortex works, we must seek solutions that are universal in that they

apply to every sensory modality and capability of the neocortex.

それは、私達に教えます|大脳新皮質がいかに働くかを理解するためには、大脳皮質の全ての感覚のモダリティーと能力

To think of vision as a “vision problem” is misleading.

視覚を「視覚問題」として考えることは、人を惑わします。

Instead we should think about vision as a “sensory motor problem” and ask how vision is the same

as hearing, touch or language.

そのかわり、私達は、視覚を「感覚-運動問題」として考えなければなりませんし、いかに視覚が、聴覚、触覚、言語と同じであるかを問わねばなりません。

Once we understand the common cortical principles, we can apply them to any

sensory and behavioral systems, even those that have no biological counterpart.

一旦、私達が、大脳皮質の共通の原理を理解しましたら、私達はその原理を感覚や動作の任意のシステム(生体のむほうに対応するものが無い場合でも)に適用できます。

The theory and methods described in this book were derived with this idea in mind.

この本に記述される理論と方法は、この考えを心にいだいて導いたものです。

Whether we build a system that sees using light or a system that “sees” using radar or a system that directly senses GPS coordinates, the underlying learning

methods and algorithms will be the same.

私達が、光を使って見るシステムを構築しようとも、レーダーやGPS座標を直接感知するシステムを使って「見る」システムを構築しようとも、潜在する学習方法や学習アルゴリズムは、同じです。

●Today we understand enough about how the neocortex works that we can build practical systems that solve

valuable problems today.

今日、私達は、いかにして大脳新皮質が働くかについて十分理解していますので、今日、価値のある問題を解決する実用的なシステムを構築することができます。

Of course, there are still many things we don’t understand about the brain and the

neocortex.

勿論、私達が脳や大脳新皮質について知らないことはまだ沢山あります。

It is important to define our ignorance as clearly as possible so we have a roadmap of what needs to

be done.

私達が知らないことをできるだけ明瞭に定義して、何をなすべきかのロードマップを持つことが大切です。

This book reflects the state of our partial knowledge.

この本は、私達の部分的な知識の状態を映し示します。

The table of contents lists all the topics we anticipate we need to understand, but only some chapters have been written.

目次は、私達が理解する必要があると予測される全てのトピックをリストアップしていますが、いくつかの章しか書かれていません。

Despite the many topics we don’t understand, we are confident that we have made enough progress in understanding some of the core

principles of intelligence and how the brain works that the field of machine intelligence can move forward more

rapidly than in the past.

私達が理解していないトピックが沢山あるにも係らず、私達は自信があります|知能の中核原理のいくつかや、脳がいかに働くかについての理解に十分な進展を遂げたので、機械知能の分野は、過去よりも急速に前進できることに|。

The field of machine intelligence is poised to make rapid progress.

機械知能の分野は、急速な発達ができる準備が整っています。

●Hierarchical Temporal Memory, or HTM, is the name we use to describe the overall theory of how the

neocortex functions.

階層的時間記憶 (HTM)

は、私達が、大脳新皮質がいかに機能するかの全体理論を記述するために使う名前です。

It also is the name we use to describe the technology used in machines that work on

neocortical principles.

それはまた、大脳新皮質の原理で働く機械に使われる技術を記述するためにも使います。

HTM is therefore a theoretical framework for both biological and machine intelligence.

HTMは、それ故、生物学的知能と機械知能の両方の理論的枠組みです。

●The term HTM incorporates three prominent features of the neocortex.

用語 HTM

は、大脳新皮質の三つの顕著な特徴を包含します。

First, it is best to think of the neocortex

as a "memory" system.

最初に、HTM

は、大脳新皮質を記憶システムとして考えるのに最適です。

The neocortex must learn the structure of the world from the sensory patterns that

stream into the brain.

大脳新皮質は、世界の構造を、脳に流れ込んでくる感覚パターンから学習しなければなりません。

Each neuron learns by forming connections, and each region of the neocortex is best

understood as a type of memory system.

各ニューロンは、接続を形成することにより学習します。大脳新皮質の各領域は、記憶システムの一タイプと考えると最もよく理解できます。

Second, the memory in the neocortex is primarily a memory of

time-changing, or "temporal", patterns.

第二に、大脳新皮質の記憶は、主として、時間変化する、すなわち「時間的」パターンの記憶です。

The inputs and outputs of the neocortex are constantly in motion, usually

changing completely several times a second.

大脳新皮質の入力と出力は、常に動いています。通常は、毎秒数回は完全に変化しています。

Each region of the neocortex learns a time-based model of its

inputs, it learns to predict the changing input stream, and it learns to play back sequences of motor

commands.

大脳新皮質の各領域は、その入力の時間ベースのモデルを学習します。入力の流れの変化を予測することを学習します。運動の命令の並びを再生することを学習します。

And finally, the regions of the neocortex are connected in a logical "hierarchy".

そして、最後に、大脳新皮質の領域は、論理的な「階層性」で連結されています。

Because all the regions of the neocortex perform the same basic memory operations, the detailed understanding of one

neocortical region leads us to understand how the rest of the neocortex works.

大脳新皮質のすべての領域は、同一の基本記憶操作を実行しますので、大脳新皮質の領域の一つを詳細に理解できれば、残りの新皮質がいかに働くかも理解することになります。

These three principles, “hierarchy”, “temporal” patterns, and “memory”, are not the only essential principles of an intelligent system,

but they suffice as a moniker to represent the overall approach.

これら三つの原理、「階層」、「時間」パターン、「記憶」は、知能システムの本質的な原理であるだけでなく、アプローチの全体を表す呼称として十分です。

●Although HTM is a biologically constrained theory, and is perhaps the most biologically realistic theory of how

the neocortex works, it does not attempt to include all biological details.

HTM

は、生物学的に制限された理論で、大脳新皮質がいかに働くかについての生物学的に最もリアリスティックな理論ですが、生物学的なすべての詳細を含むことを試みているわけではありません。

For example, the biological neocortex exhibits several types of rhythmic behavior in the firing of ensembles of neurons.

例えば、生物学的な新皮質は、ニューロンの集合を発火させるリズミックな振る舞いのいくつかのタイプを表示します。

There is no doubt that these rhythms are essential for biological brains.

これらのリズムが、生物学的脳にとって本質的であることは、間違いありません。

But HTM theory does not include these rhythms because we don’t

believe they play an information-theoretic role.

しかし、HTM

理論は、これらのリズムは含みません。それらが情報理論的な役割を果たすとは思えないからです。

Our best guess is that these rhythms are needed in biological

brains to synchronize action potentials,

最良の推測は、これらのリズムは、生物学的脳にとっては、活動ポテンシャルを同期させるために必要だということです。

but we don’t have this issue in software and hardware implementations

of HTM.

しかし、私達は、HTM

のソフトウェア的、ハードウェア的実装においてこの問題を持ちません。

If in the future we find that rhythms are essential for intelligence, and not just biological brains, then

we would modify HTM theory to include them.

もし将来、リズムが知能、生物学的脳だけでなく、にとって本質的だとわかりましたら、そのとき、私達は、HTM理論を修正して、それらを取り込みます。

There are many biological details that similarly are not part of

HTM theory.

同様に HTM

理論の部分になっていない多くの生物学的な詳細事項があります。

Every feature included in HTM is there because we have an information-theoretical need that is

met by that feature.

HTMに含まれるすべての特徴は、その特徴によって満たされる情報科学的必要性があるからこそ、そこにあるのです。

●HTM also is not a theory of an entire brain; it only covers the neocortex and its interactions with some closely

related structures such as the thalamus and hippocampus.

HTM

はまた、脳全体の理論ではありません; それは、カバーするだけです|大脳新皮質と、{その相互作用|いくつかの密接に関係した構造物(視床や海馬のような)との|}|。

The neocortex is where most of what we think of as intelligence resides but it is not in charge of emotions, homeostasis, and basic behaviors.

大脳新皮質は、私達が知能と思っているものの殆どが住む場所ですが、感情やホメオスタシスや基本行動は担当していません。

Other, evolutionarily

older, parts| of the brain| perform| these

functions|.

他の、進化的にはずっと古い、部分|脳の|、が、実施します|これらの機能を|。

These older parts| of the brain| have been| under evolutionary pressure| for much longer

time|, and although they consist of neurons, they are heterogeneous in architecture

and function.

これらの、ずっと古い部分|脳の|、は、いました|進化の圧力のもとに|ずっと長い間|。そして、それらは、ニューロンから成るにもかかわらず、アーキテクチャや機能は、異質です。

We are not interested in| emulating entire brains or in making machines that are human-like, with

human-like emotions and desires.

私達は、興味を持ちません|脳全体を模倣することや、人間らしく人間らしい感情や欲望をもった機械を造ることに|。

Therefore intelligent machines, as we define them, are not likely to pass the

Turing test or be like the humanoid robots seen in science fiction.

それ故、知能機械|私達が定義する意味での|、は、チューリング試験を通過(合格)したり、SFでみられるヒューマノイドのようになるとは思えません。

This distinction does not suggest that intelligent machines will be of limited utility.

この区別は、示唆しません|知能機械が限定された効用しかもたないことを|。

Many will be simple, tirelessly sifting through vast amounts of data

looking for unusual patterns.

多くの知能機械は、単純で、疲れることなく大量のデータの篩い分けをするでしょう|異常なパターンを求めて|。

Others will be fantastically fast and smart, able to explore domains that humans

are not well suited for.

別の知能機械は、夢のように高速で賢く、人間にはあまりむいていない領域の探索が可能です。

The variety we will see in intelligent machines will be similar to the variety we see in

programmable computers.

知能機械に見られる多様性は、プログラム可能なコンピュータに見られる多様性と同様でしょう。

Some computers are tiny and embedded in cars and appliances, and others occupy

entire buildings or are distributed across continents.

或るコンピュータは、小さくて、車や製品器具に組み込まれており、別のコンピュータは、建物全体を占めたり、大陸間を渡って分布したりします。

Intelligent machines will have a similar diversity of size, speed, and applications, but instead of being programmed they will learn.

知能機械は、サイズや、速度や、応用に同様の多様性をもつでしょう。しかし、プログラムされるのではなく、彼らは、(自分で)学習します。

●HTM theory cannot be expressed succinctly in one or a few mathematical equations.

HTM

理論は、一行もしくは数行の数式で、簡潔に表現できるものではありません。

HTM is a set of principles that work together to produce perception and behavior.

HTM

理論は、です|一組の原理|一緒に働いて知覚や行動を産む|。

In this regard, HTMs are like computers.

この観点から、HTM

理論は、コンピュータのようなものです。

Computers can’t be described purely mathematically.

コンピュータも、純粋に数学的には記述できません。

We can understand how they work, we can simulate them, and subsets of computer science can be described in formal mathematics, but ultimately we have to build them and

test them empirically to characterize their performance.

私達は、それらがどう働くかを理解できますし、それらをシミュレートすることができますし、計算科学のサブセット(部分集合)は、形式数学で記述できますが、しかし、究極的に、私達は、コンピュータを作って、経験的に試験して、そのパフォーマンスの特性を示すしかありません。

Similarly, some parts of HTM theory can be analyzed mathematically.

同様に、HTM

理論の或る部分は、数学的に解析できます。

For example, the chapter in this book on sparse distributed representations is mostly about

the mathematical properties of sparse representations.

例えば、本書の疎分布表現に関する章は、殆ど、疎な表現の数学的特性に関するものです。

But other parts of the HTM theory are less amenable to

formalism.

しかし、HTM

理論の他の部分は、形式主義にそれほど従順ではありません。

If you are looking for a succinct mathematical expression of intelligence, you won’t find it.

もしあなたが、知能の簡潔な数学的表式を探し求めても、みつからないでしょう。

In this

way, brain theory is more like genetic theory and less like physics.

かくして、脳の理論は、遺伝学により似ていて、物理学にはより似ていません。

●Historically, intelligence has been defined in behavioral terms.

歴史的に、知能は、行動の用語で定義されてきました。

For example, if a system can play chess, or drive a car, or answer questions from a human, then it is exhibiting intelligence.

例えば、もしシステムが、チェスをしたり、車を運転したり、人間からの質問に答えたりすれば、そのシステムは、知能を表示しています。

The Turing Test is the most famous example of this line of thinking.

チューリングテストは、この線の考え方の最も有名な例です。

We believe this approach to defining intelligence fails on two accounts.

私達は、信じます|このアプローチで知能を定義すると、二つの点で失敗することを|。

First, there are many examples of intelligence in the biological world that differ from human intelligence and would

fail most behavioral tests.

最初に、生物学の世界にも知能の沢山の例がありますが、それらは人間の知能とは違っていて、殆どの行動試験は通らないでしょう。

For example, dolphins, monkeys, and humans are all intelligent, yet only one of

these species can play chess or drive a car.

例えば、いるか、猿、人間は、みな知能を持っていますが、これらの種のなかで、チェスをしたり、車を運転できるのは、一つだけです。

Similarly, intelligent machines will span a range of capabilities from

mouse-like to super-human and, more importantly, we will apply intelligent machines to problems that have no

counterpart in the biological world.

同様に、知能機械は、マウスのような能力から、超人的能力まで広い範囲にまたがるでしょう。そして、もっと重要なことには、私達は、知能機械を、生物の世界には対応するもののいない問題に適用しようと思います。

Focusing on human-like performance is limiting.

人間のようなパフォーマンスに集中することは、窮屈です

●The second reason we reject behavior-based definitions of intelligence is that they don’t capture the incredible

flexibility of the neocortex.

私達が、行動を基礎において知能を定義することに反対する第二の理由は、新皮質の信じられないほどの柔軟性を捕えることができないからです。

The neocortex uses the same algorithms for all that it does, giving it flexibility that

has enabled humans to be so successful.

大脳新皮質は、それが行うすべてのことに、同じアルゴリズムを使います。それは、人類をこんなに成功させた柔軟性を新皮質に与えます。

Humans can learn to perform a vast number of tasks that have no

evolutionary precedent because our brains use learning algorithms that can be applied to almost any task.

人類は、学習することができます|多数の仕事を遂行することを|進化的に洗礼のない|。それは、私達の脳が、どんな仕事にも適用できる学習アルゴリズムを用いるからです。

The way the neocortex sees is the same as the way it hears or feels.

大脳新皮質が、視る方法は、聞いたり感じたりする方法と同じです。

In humans, this universal method creates language, science, engineering, and art.

人間において、この宇宙的な(万能な)方法は、言語や、科学や、工学や、芸術を創造しました。

When we define intelligence as solving specific tasks, such as playing

chess, we tend to create solutions that also are specific.

私達が、定義するとき|知能を|個別な仕事(たとえばチェスをするというような)を解決することとして|、私達は、個別な解決法を作りあげる傾向にあります。

The program that can win a chess game cannot learn to drive.

チェスのゲームに勝つことができるプログラムは、車を運転することはできません。

It is the flexibility of biological intelligence that we need to understand and embed in our intelligent

machines, not the ability to solve a particular task.

生物学的な知能の柔軟性こそが、私達が、理解して、知能機械に組み込むことが必要なものであって、個別の仕事を解く能力ではありません。

Another benefit of focusing on flexibility is network effects.

柔軟性に焦点を当てるもう一つの利点は、ネットワーク効果です。

The neocortex may not always be best at solving any particular problem, but it is very good at solving a huge

array of problems.

大脳新皮質は、任意の個別の問題に常に最適であるわけではありませんが、非常に広範の問題の解決が上手です。

Software engineers, hardware engineers, and application engineers naturally gravitate

towards the most universal solutions.

ソフト技術者も、ハード技術者も、応用技術者も、自然に、最も宇宙的な(万能な)解決法に引き寄せられます。

As more investment is focused on universal solutions, they will advance

faster and get better relative to other more dedicated methods.

投資がこの宇宙的な解決法に集中するにつれ、これら解決法は、より速くより良くなります|その他のより個別の方法と比較して|。

Network effects have fostered adoption many times in the technology world; this dynamic will unfold in the field of machine intelligence, too.

ネットワーク効果は、テクノロジーの世界で、何度も、アドプション(採択)を育てました。このダイナミクスは、機械知能の分野でも展開するでしょう。

●Therefore we define the intelligence of a system by the degree to which it exhibits flexibility: flexibility in

learning and flexibility in behavior.

それ故、私達は、定義します|あるシステムの知能を|それが柔軟性

(学習の柔軟性と行動の柔軟性) を示す程度によって|

Since the neocortex is the most flexible learning system we know of, we

measure the intelligence of a system by how many of the neocortical principles that system includes.

大脳新皮質は、私達が知っている最も柔軟な学習システムですので、私達は、測定します|あるシステムの知能を|そのシステムがいかに沢山の新皮質の原理を含むかによって|。

This book is an attempt to enumerate and understand these neocortical principles.

本書は、これら新皮質の原理を、列挙し理解する試みです。

Any system that includes all the principles we cover in this book will exhibit cortical-like flexibility, and therefore cortical-like intelligence.

この本でカバーするすべての原理を含有するシステムは、みな、新皮質のような柔軟性、そして、それ故に、新皮質のような知能、を示すでしょう。

By making systems larger or smaller and by applying them to different sensors and embodiments, we can create

intelligent machines of incredible variety.

システムを大きくしたり小さくしたりし、また、それらを様々なセンサーや、身体性具現化に適用することにより、私達は、信じられないくらい多様な知能機械を創造することができます。

Many of these systems will be much smaller than a human neocortex

and some will be much larger in terms of memory size, but they will all be intelligent.

これらのシステムの多くは、人間の新皮質よりもずつと小さいでしょう。いくつかは、記憶容量の点では、ずっと大きいでしょう。しかし、それらはみんな知能をもつでしょう。

●The structure of this book may be different from those you have read in the past.

本書の構造は、これまでにあなたが読んだ本とは違っているでしょう。

First, it is a “living book”.

最初に、本書は、「生きている本」です。

We are releasing chapters as they are written, covering first the aspects of the theory that are best understood.

私達は、各章をリリースします、それが書かれた時に|理論のアスペクト(外観)が最もよく理解できるようにカバーして|。

Some chapters may be published in draft form, whereas others will be more polished.

いくつかの章は、草稿の形で出版されるかもしれませんし、いくつかの章は、もっと推敲されているかもしれません。

For the foreseeable future this book will be a work in progress.

予測できるしばらくの間は、本書は、進行中の仕事です。

We have a table of contents for the entire book, but even this will

change as research progresses.

本全体の目次がありますが、これは、研究の進展によって変更されるでしょう。

●Second, the book is intended for a technical but diverse audience.

第二に、本書は、技術系ではあるけれど、多様な読者を対象とします。

Neuroscientists should find the book helpful as it provides a theoretical framework to interpret many biological details and guide experiments.

神経科学の研究者達も、本書を役立つと思うでしょう|本書は、理論的枠組みを提供して、多くの生物学的な詳細事を解釈し、実験を指導するので|。

Computer scientists can use the material in the book to develop machine intelligence hardware, software, and

applications based on neuroscience principles.

計算科学の研究者達は、この本の材料を使って、機械知能のハード・ソフト・応用を開発できます|神経科学の原理に基づいて|。

Anyone with a deep interest in how brains work or machine intelligence will hopefully find the book to be the best source for these topics.

脳がいかに働くかとか機械知能に深い興味を持っているみなさんに、本書が、これらの話題の最も良いソース(源泉)となることを願います。

Finally, we hope that academics and students will find this material to be a comprehensive introduction to an emerging and important field that

offers opportunities for future research and study.

最後に、アカデミー(大学研究機関 )の研究者や学生さんにも、この本の材料が、包括的な導入になることを期待します|将来の研究や学習の機会を与えてくれる今出現中の重要な分野の|。

●The structure of the chapters in this book varies depending on the topic.

本書の章の構造は、トピックによって変化しています。

Some chapters are overview in nature.

いくつかの章は、性格上、概論です。

Some chapters include mathematical formulations and problem sets to exercise the reader’s

knowledge.

いくつかの章は、数式や、読者の知識を訓練する問題セットを含んでいます。

Some chapters include pseudo-code.

いくつかの章は、擬似のプログラム・コードを含みます。

Key citations will be noted, but we do not attempt to have a

comprehensive set of citations to all work done in the field.

主要な引用は、明示しますが、この分野でなされているすべての研究の包括的な引用は、試みません。

As such, we gratefully acknowledge the many pioneers whose work we have built upon who are not explicitly mentioned.

ですから、私達は、ありがたく感謝します|多くのパイオニア達に|彼らの仕事に依拠したけれども、明確には言及していない|。

●We are now ready to jump into the details of biological and machine intelligence.

私達は、いま、生物学的な知能と機械知能の詳細に飛び込む準備が整いました。

2016.9.22 更新2016.9.24

http://numenta.com/assets/pdf/biological-and-machine-intelligence/0.4/BaMI-HTM-Overview.pdf

●In the September 1979 issue of Scientific American, Nobel Prize-winning scientist Francis Crick wrote about the

state of neuroscience.

Scientific American の1979年9月号に、ノーベル賞受賞科学者のフランシス・クリックが、神経科学の現状について書きました。

He opined that despite the great wealth of factual knowledge about the brain we had

little understanding of how it actually worked.

彼は意見を述べました|脳に関する事実の知識が非常に沢山あるにもかかわらず、農が実際にどう働くかについての知識は、殆ど無いと|。

His exact words were, “What is conspicuously lacking is a broad

framework of ideas within which to interpret all these different approaches” (Crick, 1979).

彼の正確な言葉は、「明瞭に欠けているものは、です|幅広い枠組みのアイデア|これら様々なアプローチのすべてを解釈できる|。」

Hierarchical

Temporal Memory (HTM) is, we believe, the broad framework sought after by Dr. Crick.

HTMこそ、私は信じます、クリック博士が求めた幅広い枠組みです。

More specifically, HTM

is a theoretical framework for how the neocortex works and how the neocortex relates to the rest of the brain

to create intelligence.

もっといいますと、HTM

理論は、です|理論的な枠組み|大脳新皮質がいかに働き、大脳新皮質がいかに脳の残りの部分と関係して知能を生み出すのかの|。

HTM is a theory of the neocortex and a few related brain structures; it is not an attempt

to model or understand every part of the human brain.

HTM

は、新皮質といくっかの関係する脳構造の理論です;人間の脳のすべての部分をモデル化し理解する試みではありません。

The neocortex comprises about 75% of the volume of

the human brain, and it is the seat of most of what we think of as intelligence.

新皮質は、人間の脳の約75%の体積を占めます。そして、私達が知能と考えるものの殆どの場所となつています。

It is what makes our species

unique.

新皮質こそが、私達人類を、唯一無二のものにしています。

●HTM is a biological theory, meaning it is derived from neuroanatomy and neurophysiology and explains how

the biological neocortex works.

HTM

は、生物学的理論です。そのことは、神経解剖学や神経生理学から由来し、生物学的な新皮質がいかに働くかを説明することを意味します。

We sometimes say HTM theory is “biologically constrained,” as opposed to

“biologically inspired,” which is a term often used in machine learning.

私達は、時々、HTM

理論は「生物学的制約を受けている」と言います。「生物学的に触発されて」と対抗して。これは、機械学習でよく使われる用語です。

The biological details of the neocortex

must be compatible with the HTM theory, and the theory can’t rely on principles that can’t possibly be

implemented in biological tissue.

新皮質の生物学的詳細は、HTM

理論と両立しなければなりません。そして、HTM

理論は、生物学的組織では実行できそうもない原理に依拠することはできません。

For example, consider the pyramidal neuron, the most common type of

neuron in the neocortex.

例えば、新皮質の中で、ニューロンの最も普通なタイプである、錐体ニューロンを考えてみなさい。

Pyramidal neurons have tree-like extensions called dendrites that connect via

thousands of synapses.

錐体ニューロンは、デンドライト(樹状突起)と呼ばれる樹状のエクステンションを持っていて、何千ものシナプスと接続しています。

Neuroscientists know that the dendrites are active processing units, and that

communication through the synapses is a dynamic, inherently stochastic process (Poirazi and Mel, 2001).

神経科学の研究者は、知っています|デンドライトが活動的処理ユニットであり、シナプスを通した通信は、動的で本質的な確率過程であるということを|。

The

pyramidal neuron is the core information processing element of the neocortex, and synapses are the substrate

of memory.

錐体ニューロンは、新皮質の中核的情報処理要素であり、シナプスは、記憶の

substrate (基質、低質) です。

Therefore, to understand how the neocortex works we need a theory that accommodates the

essential features of neurons and synapses.

それ故、新皮質がいかにして働くかを理解するためには、私達は、ニューロンとシナプスの本質的な特徴を許容し説明する理論が必要です。

Artificial Neural Networks (ANNs) usually model neurons with no

dendrites and few highly precise synapses, features which are incompatible with real neurons.

人工ニューラルネット (ANN)

は、通常、デンドライト(樹状突起)がなく、高度に正確なシナプスを殆どもたないニューロンをモデル化します。これは、実際のニューロンとは両立しません。

This type of

artificial neuron can’t be reconciled with biological neurons and is therefore unlikely to lead to networks that

work on the same principles as the brain.

このタイプの人工ニューロンは、生物学的ニューロンと合わせることはできません。また、脳と同じ原理で働くネットワークに導かれることもありそうでありません。

This observation doesn’t mean ANNs aren’t useful, only that they

don’t work on the same principles as biological neural networks.

こう言っても、ANNが役立たないと言っているのではなく、ただ、生物学的ニューラルネットと同じ原理では働かないと言っているのです。

As you will see, HTM theory explains why

neurons have thousands of synapses and active dendrites.

お解りになるように、HTM理論は、何故、ニューロンは、何千ものシナプスと活動的デンドライトを持つのか説明します。

We believe these and many other biological features

are essential for an intelligent system and can’t be ignored.

私達は、信じます|これらのことやその他沢山の生物学的特性は、知能システムにとって本質的で、無視できないことを|。

Figure 1 Biological and artificial neurons. 図1 生物学的ニューロンと、人工ニューロン

●Figure 1a shows an artificial neuron typically used in machine learning and artificial

neural networks.

図1aは、示します|人工ニューロンを|機械学習や人工ニューラルネットで典型的に使用される|。

Often called a “point neuron” this form of artificial neuron has relatively few synapses and no dendrites.

しばしば「点ニューロン」と呼ばれ、このタイプの人工ニューロンは、シナプスを殆ど持たず、デンドライトを持ちません。

Learning in a point neuron occurs by changing the “weight” of the synapses which are represented by a scalar value that can

take a positive or negative value.

点ニューロンにおける学習は、起こります|シナプスの重みを変えることにより|正や負の値をとることができるスカラー値によって表現される|。

A point neuron calculates a weighted sum of its inputs which is applied to a non-linear

function to determine the output value of the neuron.

点ニューロンは、計算します|入力の重み付き総和を|ニューロンの出力値を決定する非線形関数に適用して|。

Figure 1b shows a pyramidal neuron which is the most common type of

neuron in the neocortex.

図1bは、示します|錐体ニューンを|新皮質内のニューロンの最も普通なタイプである|。

Biological neurons have thousands of synapses arranged along dendrites.

生物学的ニューロンは、何千ものトナプスをデンドライトに沿って配置しています。

Dendrites are active

processing elements allowing the neuron to recognize hundreds of unique patterns.

デンドライトは、活動的処理エレメントで、ニューロンが何百もの特異なパターンを認識するのを可能にします。

Biological synapses are partly stochastic

and therefore are low precision.

生物学的シナプスは、部分的に確率論的で、それ故、精度は高くありません。

Learning in a biological neuron is mostly due to the formation of new synapses and the

removal of unused synapses.

生物学的ニューロン内の学習は、多くの場合、新しいシナプスの形成と、使われないシナプスの除去によります。

Pyramidal neurons have multiple synaptic integration zones that receive input from different

sources and have differing effects on the cell.

錐体ニューンは、多重シナプス積分領域を持ち、様々なソースからの入力を受け取り、細胞にそれぞれ違う効果を与えます。

Figure 1c shows an HTM artificial neuron.

図1c は、HTM人工ニューロンを示します。

Similar to a pyramidal neuron it has

thousands of synapses arranged on active dendrites.

錐体ニューンと同様に、HTMニューロンは、何千ものトナプスを持ち、活動的デンドライトの上に配置されています。

It recognizes hundreds of patterns in multiple integration zones.

それは、認識します|何百ものパターンを|多重積分領域において|。

The

HTM neuron uses binary synapses and learns by modeling the growth of new synapses and the decay of unused synapses.

HTMニューロンは、二値のシナプスを使用し、新しいシナプスの成長や使われないシナプスの崩壊をモデル化して学習します。

HTM neurons don’t attempt to model all aspects of biological neurons, only those that are essential for information theoretic

aspects of the neocortex.

HTMニューロンは、試みません|生物学的ニューロンの全ての面のモデル化を|。新皮質の情報科学的に本質的なもののみをモデル化します。

●Although we want to understand how biological brains work, we don’t have to adhere to all the biological

details.

私達は、生物学的脳がいかに働くかを理解したいと思いますが、生物学的詳細のすべてに執着する必要はありません。

Once we understand how real neurons work and how biological networks of neurons lead to memory

and behavior, we might decide to implement them in software or in hardware in a way that differs from the

biology in detail, but not in principle.

一旦、私達が、理解すると|実際のニューロンがいかに働き、ニューロンの生物学的なネットワークがいかに記憶や行動を導くかを|、私達は、試みるでしょう|それらを実装することを|ソフトウェアやハードウェアにおいて|生物学的に詳細には異なっているが、原理的には異なっていない方法で|。

But we shouldn’t do that before we understand how biological neural

systems work.

しかし、私達は、それをすべきではありません。私達が生物学的なニュラル・システムがいかに働くかを理解しないうちは。

People often ask, “How do you know which biological details matter and which don’t?”

人々はしばしば尋ねます。「生物学的な詳細のどの部分が係っていて、どの部分が係っていないか、どうしてわかるのですか?」と。

The

answer is: once you know how the biology works, you can decide which biological details to include in your

models and which to leave out, but you will know what you are giving up, if anything, if your software model

leaves out a particular biological feature.

答えはこうです:一旦あなたが、生物学がいかに働くかがわかれば、生物学的な詳細のどの部分をあなたのモデルに含み、どの部分を除外するか決定することができるし、もし、あなたのソフトウェアモデルがある生物学的特性を除外するようなことが、もしあったときは、あなたは、何をあきらめているのかは、理解できるでしょう。

Human brains are just one implementation of intelligence; yet today,

humans are the only things everyone agrees are intelligent.

人間の脳は、知能の一つの実装にすぎません;

それでも、今日、人間は、知能的であるとみんなが認める唯一のものです。

Our challenge is to separate aspects of brains and

neurons that are essential for intelligence from those aspects that are artifacts of the brain’s particular

implementation of intelligence principles.

私達の挑戦は、です|分離すること|知能に本質的な脳とニューロンのアスペクト(面)を、脳が実装した知能原理のアーティファクト(作り事)であるアスペクトから|。

Our goal isn’t to recreate a brain, but to understand how brains work

in sufficient detail so that we can test the theory biologically and also build systems that, although not identical

to brains, work on the same principles.

私達の目標は、脳を再作成することではなく、脳がいかに働くかを十分詳細に理解し、理論を生物学的にテストし、脳とは同一ではないけれども、同じ原理のもとに働くシステムを構築することです。

●Sometime in the future designers of intelligent machines may not care about brains and the details of how

brains implement the principles of intelligence.

未来のある時点で、知能機械の設計者は、脳について、そして、脳がいかに知能原理を実装するかの詳細について、気にかけないでしょう。

The field of machine intelligence may by then be so advanced

that it has departed from its biological origin.

機械知能の分野は、その時、ずっと前進していて、生物学的起源からは離れてしまっているかもしれません。

But we aren’t there yet.

しかし、私達は、まだそこに到っていません。

Today we still have much to learn from

biological brains and therefore to understand HTM principles, to advance HTM theory, and to build intelligent

machines, it is necessary to know neuroscience terms and the basics of the brain’s design.

今日、私達は、生物学的脳から学ぶべきことを沢山持っています。それ故、HTM原理を理解し、HTM理論を前進させ、知能機械を造るために、神経科学の用語と、脳の設計の基礎を知ることが必要です。

●Bear in mind that HTM is an evolving theory.

心に留めおいてください。HTMは、進化中の理論です。

We are not done with a complete theory of neocortex, as will

become obvious in the remainder of this chapter and the rest of the book.

私達は、新皮質の理論をまだ完成していません|この章の残りの部分や、本書の残りの部分で明らかになるように|。

There are entire sections yet to be

written, and some of what is written will be modified as new knowledge is acquired and integrated into the

theory.

まだ全体が書かれていない節もあります。書かれたもののいくつかも、新知識が得られて、理論に組み込まれるにつれ、修正されるでしょう。

The good news is that although we have a long way to go for a complete theory of neocortex and

intelligence, we have made significant progress on the fundamental aspects of the theory.

良いニュースは、新皮質や知能の完全な理論にはまだ長い道のりがあるにもかかわらず、理論の基本的な面において、顕著な進展を得ています。

The theory includes

the representation format used by the neocortex (“sparse distributed representations” or SDRs), the

mathematical and semantic operations that are enabled by this representation format, and how neurons

throughout the neocortex learn sequences and make predictions, which is the core component of all inference

and behavior.

理論は、含みます|新皮質が使用する表現のフォーマット

(疎分布の表現)、この表現フォーマットによって可能となる数学的・意味論的操作、そして、新皮質中のニューロンが、いかにしてシークエンスを学習し予測を行うか

(これは、すべての推測や行動の中核的成分です) を|。

We also understand how knowledge is stored by the formation of sets of new synapses on the

dendrites of neurons.

私達は、また、理解しています|ニューロンの樹状突起の上に新しいシナプスの組が形成されることにより知識が貯えられることを|。

These are basic elements of biological intelligence analogous to how random access

memory, busses, and instruction sets are basic elements of computers.

これらは、生物学的知能の基本要素です|RAMや、バス(計算機内部の共用伝送路)やインストラクションセットが計算機の基本要素であることと類似して|。

Once you understand these basic

elements, you can combine them in different ways to create full systems.

一旦あなたが、これらの基礎要素を理解すると、あなたは、様々な方法でそれらを組み合わせて、完全なシステムを造ることができます。

●The remainder of this chapter introduces the key concepts of Hierarchical Temporal Memory.

この章の残りは、HTMの主要概念を紹介します。

We will describe

an aspect of the neocortex and then relate that biological feature to one or more principles of HTM theory.

私達は、新皮質の一つの面を記述し、その生物学的特性をHTM理論のいくつかの原理に関係付けます。

In-depth

descriptions and technical details of the HTM principles are provided in subsequent chapters.

HTM原理の掘り下げた記述や技術的詳細は、後続の章で提供します。

●The human brain comprises several components such as the brain stem, the basal ganglia, and the cerebellum.

人間の脳は、成ります|いくつかの成分から|脳幹、基底核、小脳などの|。

These organs are loosely stacked on top of the spinal cord.

これらの器官は、脊髄の最上部にゆるく積み重ねられています。

The neocortex is just one more component of the

brain, but it dominates the human brain, occupying about 75% of its volume.

新皮質は、大脳のもう一つの成分ですが、人間の脳で卓越し、体積で約

75% を占めます。

The evolutionary history of the

brain is reflected in its overall design.

脳の進化の歴史が、その全体のデザインに反映されています。

Simple animals, such as worms, have the equivalent of the spinal cord

and nothing else.

蠕虫のような単純な動物には、脊髄と等価なものはありますが、ほかのものはありません。

The spinal cord of a worm and a human receives sensory input and generates useful, albeit

simple, behaviors.

蠕虫と人間の脊髄は、感覚入力を受け取り、単純ではあるが有用な行動を生みます。

Over evolutionary time scales, new brain structures were added such as the brainstem and

basal ganglia.

進化の時間スケール上では、新しい脳の構造は、脳幹や基底核のように付加されます。

Each addition did not replace what was there before.

付加は、そこに以前あったものを置きかえることはしませんでした。

Typically the new brain structure received

input from the older parts of the brain, and the new structure’s output led to new behaviors by controlling the

older brain regions.

典型的に、新しい脳構造は、脳の古い部分から入力を受け取りました。この新しい構造からの出力は、脳の古い部分を制御しながら、新しい行動に導きました。

The addition of each new brain structure had to incrementally improve the animal’s

behaviors.

新しい脳構造の付加は、動物の行動を徐々に改良しました。

Because of this evolutionary path, the entire brain is physically and logically a hierarchy of brain

regions.

この進化の道の故に、全体の脳は、物理的にも論理的にも、脳領域の階層構造です。

Figure 2 a) real brain b) logical hierarchy (placeholder) 図2

●The neocortex is the most recent addition to our brain.

新皮質は、最も最近に脳に付加されたものです。

All mammals, and only mammals, have a neocortex.

すべての哺乳類が、そして哺乳類のみが、新皮質を持ちます。

The

neocortex first appeared about 200 million years ago in the early small mammals that emerged from their

reptilian ancestors during the transition of the Triassic/Jurassic periods.

新皮質は、最初に現れました、200M年前に、初期の小さな哺乳類に|先祖の爬虫類から出現した|三畳紀からジュラ紀への遷移期の間に|。

The modern human neocortex

separated from those of monkeys in terms of size and complexity about 25 million years ago (Rakic, 2009).

現代の人類の新皮質は、分離しました|猿のそれから|大きさや複雑さにおいて|約25M年前に|。

The

human neocortex continued to evolve to be bigger and bigger, reaching its current size in humans between

800,000 and 200,000 years ago.

人類の新皮質は、進化を続けました|より大きくより大きくなるように|、そして到達しました|人間の現在のサイズに|。

In humans, the neocortex is a sheet of neural tissue about the size of a large

dinner napkin (1,000 square centimeters) in area and 2.5mm thick.

人類において、新皮質は、です|神経組織のシート|面積が大きなディナー用ナプキン(1000平方センチ)で厚さ2.5mmの|。

It lies just under the skull and wraps around

the other parts of the brain.

それは、頭蓋骨の下に置かれ、脳の他の部分の周りにくるまります。

(From here on, the word “neocortex” will refer to the human neocortex.

以後、新皮質は、人間の新皮質のことを指します。

References

to the neocortex of other mammals will be explicit.)

ほかの哺乳類の新皮質の場合は、明示します。

The neocortex is heavily folded to fit in the skull but this

isn’t important to how it works, so we will always refer to it and illustrate it as a flat sheet.

新皮質は、激しく畳み込まれて、頭蓋骨の中に収まります。しかし、このことは、それがどう働くかにとっては重要ではありません。そこで、私達は、常に、それについて言及したり説明したりします|平らなシートとして|。

The human neocortex

is large both in absolute terms and also relative to the size of our body compared to other mammals.

人類の新皮質は、大きさ自身でも大きいのですが、体のサイズと比較しても他の哺乳類よりおおきいです。

We are an

intelligent species mostly because of the size of our neocortex.

私達は、知能的な種です|新皮質のサイズの故に|。

●The most remarkable aspect of the neocortex is its homogeneity.

新皮質の最も特筆すべき点は、その均質性です。

The types of cells and their patterns of

connectivity are nearly identical no matter what part of the neocortex you look at.

細胞のタイプや、接続性のパターンは、ほぼ同一です|新皮質のどの部分を見ても|。

This fact is largely true across

species as well.

この事実は、おおむね、真です|どの種をみても同様に|。

Sections of human, rat, and monkey neocortex look remarkably the same.

セクション(部分)|人間と、ラットと、猿の新皮質の|、は、驚くほど同じに見えます。

The primary

difference between the neocortex of different animals is the size of the neocortical sheet.

種々の動物の新皮質間の主要な違いは、新皮質のシートのサイズです。

Many pieces of

evidence suggest that the human neocortex got large by replicating a basic element over and over.

多くの証拠片は、示唆します|人間の新皮質は、基本要素を何度も何度も複製することにより大きくなったことを|。

This

observation led to the 1978 conjecture by Vernon Mountcastle that every part of the neocortex must be doing

the same thing.

この観測は、導きました|1978年の憶測を|Vernon

Mountcastleによる|新皮質のすべての部分は同じ事をしているに違いないという|。

So even though some parts of the neocortex process vision, some process hearing, and other

parts create language, at a fundamental level these are all variations of the same problem, and are solved by

the same neural algorithms.

そこで、たとえ、[新皮質のある部分は視覚を処理し、ある部分は聴覚を処理し、ある部分は言語を作ったとしても]|根本的なレベルにおいて|、これらは、すべて同じ問題の変形で、同じ神経アルゴリズムで解かれるのです。

Mountcastle argued that the vision regions of the neocortex are vision regions

because they receive input from the eyes and not because they have special vision neurons or vision algorithms

(Mountcastle, 1978).

Mountcastleは、議論しました|新皮質の視覚の領域は、視覚の領域ですと|それが眼からの信号を受け取るという理由からであって、視覚ニューロンや視覚アルゴリズムを持っているという理由ではなく|。

This discovery is incredibly important and is supported by multiple lines of evidence.

この発見は、信じられないほど重要で、多重のラインの証拠から支持されています。

●Even though the neocortex is largely homogenous, some neuroscientists are quick to point out the differences

between neocortical regions.

たとえ、新皮質がおおむね均質だとしても、何人かの神経科学者は、すぐに、新皮質の領域間の違いを指摘します。

One region may have more of a certain cell type, another region has extra layers,

and other regions may exhibit variations in connectivity patterns.

ある領域は、あるタイプの細胞をより沢山持っていて、別の領域は、特別な層を持っていて、また別の領域は、接続性パターンに変化を示すようだと。

But there is no question that neocortical

regions are remarkably similar and that the variations are relatively minor.

しかし、問題はありません|新皮質の領域は驚くべきほど同様で、変化は比較的にマイナー(小さい)であることに|。

The debate is only about how critical

the variations are in terms of functionality.

論争点は、この変化が機能性からみてどれくらい重大であるかに関するもののみです。

●The neocortical sheet is divided into dozens of regions situated next to each other.

新皮質のシートは、互いに隣接しあう多数の領域に分割されます。

Looking at a neocortex you

would not see any regions or demarcations.

新皮質を見ても、あなたは、領域や境界は見えないでしょう。

The regions are defined by connectivity.

領域は、接続性によって定義されます。

Regions pass information to each other by sending bundles of nerve fibers into the white matter just below the neocortex.

The nerve fibers reenter at another neocortical region.

The connections between regions define a logical hierarchy.

領域間の接続は、論理的な階層性を定義します。

Information from a sensory organ is processed by one region, which passes its output to another region, which

passes its output to yet another region, etc.

感覚器官からの情報は、ある領域で処理され、その出力は別の領域に渡され、その出力はさらに別の領域に渡され、等々。

The number of regions and their connectivity is determined by our

genes and is the same for all members of a species.

領域の数や接続性は、私達の遺伝子によって決定されていて、種のすべてのメンバーで同じです。

So, as far as we know, the hierarchical organization of each

human’s neocortex is the same, but our hierarchy differs from the hierarchy of a dog or a whale.

そこで、私達の知る限り、人間の各人の新皮質の階層組織も同じです。しかし、私達の階層性は、犬や鯨の階層性とは異なります。

The actual

hierarchy for some species has been mapped in detail (Zingg, 2014).

いくつかの種の実際の階層性は、詳しくマップされています。

They are complicated, not like a simple

flow chart.

それらは、複雑で、簡単なフローチャートのようなものではありません。

There are parallel paths up the hierarchy and information often skips levels and goes sideways

between parallel paths.

階層を昇る平行な道があり、情報はしばしば、レベルをとばしたり、平行な道を横に進んだりします。

Despite this complexity the hierarchical structure of the neocortex is well established.

この複雑性にもかかわらず、新皮質の階層構造は、十分確立されています。

●We can now see the big picture of how the brain is organized.

私達は、今、脳がいかに組織されているかの大きな絵を見る事が出来ます。

The entire brain is a hierarchy of brain regions,

where each region interacts with a fully functional stack of evolutionarily older regions below it.

脳全体は、脳の各領域の階層構造です。各領域は、その下に進化的により古い領域が完全に機能的に積み重ななって相互作用しています。

For most of

evolutionary history new brain regions, such as the spinal cord, brain stem, and basal ganglia, were

heterogeneous, adding capabilities that were specific to particular senses and behaviors.

進化の歴史の大部分において、新しい脳の領域

(脊髄、脳幹、基底核など)

は、異質で、個々の感覚や行動に固有な能力を付加しました。

This evolutionary

process was slow.

この進化過程は、ゆっくりです。

Starting with mammals, evolution discovered a way to extend the brain’s hierarchy using new

brain regions with a homogenous design, an algorithm that works with any type of sensor data and any type of

behavior.

哺乳類から始めて、進化は、発見しました|脳の階層性を拡張する方法を|均質なデザインと、任意の型の感覚データや任意の型の行動に働くアルゴリズムを持った新しい脳の領域を使用して|。

This replication is the beauty of the neocortex.

Once the universal neocortical algorithms were

established, evolution could extend the brain’s hierarchy rapidly because it only had to replicate an existing

structure.

この宇宙的な(万能な)新皮質のアルゴリズムが、一旦、確立されるや、進化は脳の階層性を急速に拡張することができました。何故なら、それは、既存の構造を複製するだけでよかったので。

This explains how human brains evolved to become large so quickly.

これは、いかにして人間の脳が、こんなに急速に大きくなったのかを説明します。

Figure 3 a) brain with information flowing posterior to anterior b) logical hierarchical stack showing old brain regions and neocortical regions (placeholder) 図3

●Sensory information enters the human neocortex in regions that are in the rear and side of the head.

感覚情報は、人間の新皮質に、頭の後ろと横にある領域から入ります。

As

information moves up the hierarchy it eventually passes into regions in the front half of the neocortex.

情報が階層をあがっていくにつれ、最後には、新皮質の前半分の領域に入ります。

Some of the regions at the very top of the neocortical hierarchy, in the frontal lobes and also the hippocampus, have unique properties such as the ability for short term memory, which allows you to keep a phone number in your head for a few minutes.

These regions also exhibit more heterogeneity, and some of them are older than the

neocortex.

これらの領域は、より多くの異質性を示します。そして、そのいくつかは、新皮質よりも古いものです。

The neocortex in some sense was inserted near the top of the old brain’s hierarchical stack.

新皮質は、ある意味、古い脳の階層性の積み重ねの頂上近くに挿入されました。

Therefore as we develop HTM theory, we first try to understand the homogenous regions that are near the

bottom of the neocortical hierarchy.

それ故、私達が、HTM

理論を発展させるにつれ、私達はまず試みます|新皮質の階層性の底部近くにある均質な領域を理解することを|。

In other words, we first need to understand how the neocortex builds a

basic model of the world from sensory data and how it generates basic behaviors.

言い換えると、私達は、まず必要です|理解することが|いかに新皮質が感覚データから世界のモデルを作り、いかに基本行動を生成するかを|。

●HTM theory focuses on the common properties across the neocortex.

HTM理論は、大脳新皮質を通しての共通特性に焦点を当てます。

We strive not to understand vision or

hearing or robotics as separate problems, but to understand how these capabilities are fundamentally all the

same, and what set of algorithms can see AND hear AND generate behavior.

私達は、努めます|視覚と聴覚とロボット工学を別個の問題とは考えないように|。しかし、努めます|いかにこれらの能力が根本的に同じで、どのセットのアルゴリズムが、見て

AND 聴いて AND

行動を生むことができるのかを理解しようと|。

Initially, this general approach

makes our task seem harder, but ultimately it is liberating.

最初、この一般的なアプローチは、このタスクがより難しく見えるようにしますが、最終的には、それは解放的です。

When we describe or study a particular HTM

learning algorithm we often will start with a particular problem, such as vision, to understand or test the

algorithm.

私達が個々のHTM

学習アルゴリズムを記述し学習するときに、いばしば、個々の問題

(例えば、視覚)

で始めて、アルゴリズムを理解し試験します。

But we then ask how the exact same algorithm would work for a different problem such as

understanding language.

しかし、次に、私達は、その同じアルゴリズムが、言語理解のような別の問題で、どのように働くかを尋ねます。

This process leads to realizations that might not at first be obvious, such as vision

being a primarily temporal inference problem, meaning the temporal order of patterns coming from the retina

is as important in vision as is the temporal order of words in language.

この過程は、当初は明確でない認識に導きます。例えば、視覚が、第一に、時間的推測問題であるというような。これは、網膜から来るパターンの時間的順番が視覚において重要なのは、言語において言葉の時間的順序が重要なのと同じです。

Once we understand the common

algorithms of the neocortex, we can ask how evolution might have tweaked these algorithms to achieve even

better performance on a particular problem.

一旦、私達が新皮質の共通アルゴリズムを理解すると、私達は、尋ねることができます|いかに進化がこれらのアルゴリズムを微調整して、個々の問題へのよりよいパフォーマンスを成し遂げることができたのかと|。

But our focus is to first understand the common algorithms that

are manifest in all neocortical regions.

しかし、私達の焦点は、まず、新皮質のすべての領域に明白な共通アルゴリズムを理解することです。

●Every neocortex, from a mouse to a human, has a hierarchy of regions, although the number of levels and

number of regions in the hierarchy varies.

マウスから人間まで、すべての新皮質は、領域の階層性を持ちます。しかし、階層性のレベルの数や領域の数は変化します。

It is clear that hierarchy is essential to form high-level percepts of

the world from low-level sensory arrays such as the retina or cochlea.

明白です|階層性が本質的であることは|網膜や蝸牛のような低レベルの感覚アレイから高レベルの世界の知覚を形成するために|。

As its name implies, HTM theory

incorporates the concept of hierarchy.

名前が暗示するように、HTM

理論は、階層性の概念を包含します。

Because each region is performing the same set of memory and

algorithmic functions, the capabilities exhibited by the entire neocortical hierarchy have to be present in each

region.

各領域は、同じセットの記憶やアルゴリズム機能を実施しますので、新皮質の階層性の全体が示す能力は、各領域に存在しなければなりません。

Thus if we can understand how a single region works and how that region interacts with its hierarchical

neighbors, then we can build hierarchical models of indefinite complexity and apply them to any type of

sensory/motor system.

かくして、もし私達が、いかにある一つの領域が働き、いかにその領域が隣の階層と相互作用するかを理解できるなら、その時、私達は、無限の複雑さの階層モデルを構築し、それを任意のタイプの感覚/運動システムに適用できます。

Consequently most of current HTM theory focuses on how a single neocortical region

works and how two regions work together.

その結果、現在のHTM理論の殆どは、いかに単一の新皮質領域が働き、いかに二つの領域が一緒に働くかに集中しています。

●The neocortex is made up of neurons.

新皮質はニューロンから成ります。

No one knows exactly how many neurons are in a human neocortex, but

recent “primate scale up” methods put the estimate at 86 billion (Herculano-Houzel, 2012).

誰も正確には、人間の新皮質にどれだけ沢山のニューロンがあるか知りません。しかし、最近の「霊長類スケールアップ」法は、その見積もりを、860億としました。

The moment-to-moment

state of the neocortex, some of which defines our perceptions and thoughts, is determined by which

neurons are active at any point in time.

刻一刻状態の新皮質 (そのいくつかは、我々の感覚や思考を定義します)

は、どのニューロンが任意の時点でアクティブかによって決定されます。

An active neuron is one that is generating spikes, or action potentials.

アクティブなニューロンは、スパイク、すなわち、活動ポテンシャル、を生成するニューロンです。

One of the most remarkable observations about the neocortex is that no matter where you look, the activity of

neurons is sparse, meaning only a small percentage of them are rapidly spiking at any point in time.

新皮質に関する最も特筆すべき観測は、あなたがどこを見ても、ニューロンの活動は疎だということです。これは、それらのうち微少のパーセンテージしか、任意の時間に高速にスパイクしていないことを意味しています。

The

sparsity might vary from less than one percent to several percent, but it is always sparse.

疎の程度は、1%以下から数%まで変動しますが、常に疎です。

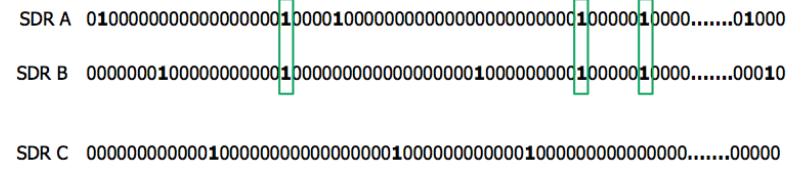

●The representations used in HTM theory are called Sparse Distributed Representations, or SDRs.

HTM 理論で使われる表現は、疎分布表現 (SDR)

と呼ばれています。

SDRs are

vectors with thousands of bits.

SDRは、何千ものビットからなるベクトルです。

At any point in time a small percentage of the bits are 1’s and the rest are 0’s.

任意の時点で、少ないパーセントのビットが"1"で、残りは"0"です。

HTM theory explains why it is important that there are always a minimum number of 1’s distributed in the

SDR,

and also why the percentage of 1’s must be low, typically less than 2%.

HTM 理論は、説明します|いつも最少数の"1"がSDRに分布していることが何故重要なのか、そして、何故1のパーセントが小さくなければならないか

(典型的には2%以下)を|。

The bits in an SDR correspond to the

neurons in the neocortex.

SDRのビットは、新皮質中のニューロンに対応します。

●SDRs have some essential and surprising properties.

SDRは、いくつかの本質的で驚くべき特性を持っています。

For comparison, consider the representations used in

programmable computers.

比較のために、プログラム可能コンピュータに使われる表現を考えましょう。

The meaning of a word in a computer is not inherent in the word itself.

コンピュータにおいて単語の意味は、単語自身の中に生得的ではありません。

If you were

shown 64 bits from a location in a computer’s memory you couldn’t say anything about what it represents.

もし、あなたが、コンピュータのメモリーのある位置からビット見せられても、それが何を意味するかについて何も言えません。

At

one moment in the execution of the program the bits could represent one thing and at another moment they

might represent something else, and in either case the meaning of the 64 bits can only be known by relying on

knowledge not contained in the physical location of the bits themselves.

プログラムの実行中のある瞬間、64ビットは、一つのことを表し、別の瞬間には、別のことを表し、どちらの場合にも、64ビットは、ビット自身の物理位置に含まれない知識に頼ることでのみ分かるのです。

With SDRs, the bits of the

representation encode the semantic properties of the representation; the representation and its meaning are

one and the same.

SDR

で、表現のビットは、表現の意味論的特性をエンコードします。表現とその意味は、一つでかつ同じです。

Two SDRs that have 1 bits in the same location share a semantic property.

同じ位置に1 ビットを持つ二つのSDRは、意味論的特性を共有します。

The more 1 bits

two SDRs share, the more semantically similar are the two representations.

二つのSDR が、もっと1

ビットを共有すれば、二つのSDR

は、意味論的にさらに同様です。

The SDR explains how brains make

semantic generalizations; it is an inherent property of the representation method.

SDR

は、いかにして脳が意味論的一般化を行うのかを説明します。それは、表現法の生来の特性です。

Another example of a unique

capability of sparse representations is that a set of neurons can simultaneously activate multiple

representations without confusion.

疎な表現の特異な能力のもう一つの例は、一組のニューロンは、混乱無く多重表現を同時に活性化できることです。

It is as if a location in computer memory could hold not just one value but

twenty simultaneous values and not get confused!

それは、まるで、コンピュータ・メモリー内の位置が、一つの値だけでなく、20個の値を同時に保持して、混乱しないということです。

We call this unique characteristic the “union property” and it

is used throughout HTM theory for such things as making multiple predictions at the same time.

私達は、この特異な特徴を「ユニオン・プロパティ」と呼びます。それは、HTM理論の中で、多重予測を同時に行うというような問題においてしようされています。

●The use of sparse distributed representations is a key component of HTM theory.

疎分布表現の使用は、HTM

理論の主要成分です。

We believe that all truly

intelligent systems must use sparse distributed representations.

私達は、すべての真に知能的なトステムは、疎分布表現を使用しなければならないと信じます。

To become facile with HTM theory, you will

need to develop an intuitive sense for the mathematical and representational properties of

SDRs.

HTM 理論に堪能になるためには、あなたは、SDRの数学的・表現的特性についての直観を開発する必要があります。

●As mentioned earlier, the neocortex appeared recently in evolutionary time.

以前にお話ししたように、新皮質は、進化の時間では、つい最近登場しました。

The other parts of the brain

existed before the neocortex appeared.

脳の他の部分は、新皮質が現れる前に存在しました。

You can think of a human brain as consisting of a reptile brain (the old

stuff) with a neocortex (literally “new layer”) attached on top of it.

人間の脳は、爬虫類の脳 (古物)

とその上にくっつけられた新皮質 (文字通り「新しい層」)

から成ると考えることが出来ます。

The older parts of the brain still have the

ability to sense the environment and to act.

脳の古い部分は、今なお、環境を知覚し行動する能力を持ちます。

We humans still have a reptile inside of us.

私達人類は、今なお、体内に、爬虫類を持ちます。

The neocortex is not a

stand-alone system, it learns how to interact with and control the older brain areas to create novel and

improved behaviors.

新皮質は、スタンド・アローンな(独立した)システムではありません。それは、いかに脳の古い領域と相互作用し、コントロールするかを学習し、新奇で改良された行動を生み出すことができます。

●There are two basic inputs to the neocortex. One is data from the senses.

新皮質には、二つの基本的な入力があります。一つは、感覚からのデータです。

As a general rule, sensory data is

processed first in the sensory organs such as the retina, cochlea, and sensory cells in the skin and joints.

一般法則として、感覚データは、まず、網膜や、蝸牛のような感覚器官や、皮膚と関節の感覚細胞で処理されます。

It then

goes to older brain regions that further process it and control basic behaviors.

それは、次に、古い脳領域に行き、そこで、さらに処理されて、基本的な行動が制御されます。

Somewhere along this path the

neurons split their axons in two and send one branch to the neocortex.

この通路のどこかで、ニューロンは、そのアクシオンを二つに分割し、一つのブランチを新皮質に送ります。

The sensory input to the neocortex is

literally a copy of the sensory data that the old brain is getting.

新皮質への感覚入力は、文字通り、古い脳が得る感覚データのコピーです。

●The second input to the neocortex is a copy of motor commands being executed by the old parts of the brain.

新皮質への二番目の入力は、脳の古い部分によって処理される運動コマンドのコピーです。

For example, walking is partially controlled by neurons in the brain stem and spinal cord.

例えば、歩行は、脳幹と脊髄のニューロンによって部分的に制御されています。

These neurons also

split their axons in two, one branch generates behavior in the old brain and the other goes to the

neocortex.

これらのニューロンは、また、そのアクシオンを二つに分割し、一つのブランチは、古い脳において行動を生み、もう一つのブランチは、新皮質に行きます。

Another example is eye movements, which are controlled by an older brain structure called the superior

colliculus.

もう一つの例は、眼の動きで、それは、上丘と呼ばれる古い脳構造によって制御されます。

The axons of superior colliculus neurons send a copy of their activity to the neocortex, letting the

neocortex know what movement is about to happen.

上丘のニューロンのアクシオンは、その活動のコピーを新皮質に送り、どんな運動が起ころうとしているのかを新皮質に知らせます。

This motor integration is a nearly universal property of

the brain.

この運動統合は、脳のほぼユニバーサルな特性です。

The neocortex is told what behaviors the rest of the brain is generating as well as what the sensors

are sensing.

新皮質は、感覚が何を見ているのかと同様に、脳の他の部分がどんな行動を生み出そうとしているのかを、告げられるのです。

Imagine what would happen if the neocortex wasn’t informed that the body was moving in some

way.

体がどう動こうとしているかを新皮質が知らされなかったら何が起こるか考えてみてください。

If the neocortex didn’t know the eyes were about to move, and how, then a change of pattern on the optic

nerve would be perceived as the world moving.

もし、眼がまさに、そして、どのように、動こうとしていることを新皮質が知らなかったら、目の神経上のパターンの変化は、世界が動いているように感じられるでしょう。

The fact that our perception is stable while the eyes move tells

us the neocortex is relying on knowledge of eye movements.

眼が動いているときに私達の感覚が定常だという事実は、新皮質が、眼の運動の知識に頼っていることを教えてくれます。

When you touch, hear, or see something, the

neocortex needs to distinguish changes in sensation caused by your own movement from changes caused by

movements in the world.

あなたが、何かを触り、聞き、見るとき、あなた自身の運動によって引き起こされた感覚の変化と、世界が動くことにより引き起こされた変化とを、新皮質は区別する必要があるのです。

The majority of changes on your sensors are the result of your own movements.

あなたの感覚への変化の大多数は、あなた自身の運動の結果です。

This

“sensory-motor” integration is the foundation of how most learning occurs in the neocortex.

この感覚-運動統合は、新皮質のなかで殆どの学習がいかに起こっているのかの基本です。

The neocortex

uses behavior to learn the structure of the world.

新皮質は、行動を使って、世界の構造を学習します。

Figure 4 showing sensory & motor command inputs to the neocortex (block diagram) (placeholder) 図4

●No matter what the sensory data represents - light, sound, touch or behavior - the patterns sent to the

neocortex are constantly changing.

感覚データが何を表現しようとも -光・音・触覚・行動-

新皮質に送られるパターンは、常時変化しています。

The flowing nature of sensory data is perhaps most obvious with sound, but

the eyes move several times a second, and to feel something we must move our fingers over objects and

surfaces.

感覚データの流れる性質は、多分、音で最も明確でしょう。しかし、眼も毎秒数回動きますし、何かを感じるためには、私達は、物や表面の上で指を動かさないといけません。

Irrespective of sensory modality, input to the neocortex is like a movie, not a still image.

感覚のモダリティとは関係なく、新皮質への入力は、動画まようで、静止画ではありません。

The input

patterns completely change typically several times a second.

入力パターンは、典型的には毎秒数回、完全に変化します。

The changes in input are not something the

neocortex has to work around, or ignore; instead, they are essential to how the neocortex works.

入力の変化は、新皮質が、なんとか対処したり、無視したりする何かではなく、新皮質がどう働くかにとって本質的なものです。

The neocortex

is memory of time-based patterns.

新皮質は、時間に基づいたパターンの記憶です。

●The primary outputs of the neocortex come from neurons that generate behavior.

新皮質の主要な出力は、行動を生むニューロンから来ます。

However, the neocortex

never controls muscles directly; instead the neocortex sends its axons to the old brain regions that actually

generate behavior.

しかし、新皮質は、決して、筋肉を直接制御しません。そのかわり、新皮質は、アクシオンを古い脳領域に送り、そこが実際に行動を生みます。

Thus the neocortex tries to control the old brain regions that in turn control muscles.

このようにして、新皮質は、古い脳領域を制御するよう試み、古い脳領域が、続いて、筋肉を制御します。

For

example, consider the simple behavior of breathing.

例えば、簡単な行動である呼吸を考えましょう。

Most of the time breathing is controlled completely by the

brain stem, but the neocortex can learn to control the brain stem and therefore exhibit some control of

breathing when desired.

殆どの時間、呼吸は、完全に脳幹によって制御されます。しかし、新皮質は、脳幹を制御することを学習することができ、希望するときに、呼吸をある程度制御することができます。

●A region of neocortex doesn’t “know” what its inputs represent or what its output might do.

新皮質のある領域は、その入力が何を表現し、その出力が何をするのかを「知り」ません。

A region doesn’t

even “know” where it is in the hierarchy of neocortical regions.

ある領域は、自分が新皮質の領域のどの階層にいるのかすら、「知り」ません。

A region accepts a stream of sensory data plus a

stream of motor commands.

ある領域は、感覚データの流れと、運動コマンドの流れを受け取ります。

From these inputs it learns of the changes in the inputs.

これらの入力から、ある領域は、入力データ内の変化について学習します。

The region will output a

stream of motor commands, but it only knows how its output changes its input.

その領域は、運動コマンドの流れを出力するでしょう。しかし、それは、出力が入力をどう変化させるかを知っているだけです。

The outputs of the neocortex

are not pre-wired to do anything.

新皮質の出力は、何かをするために予め配線されているわけではありません。

The neocortex has to learn how to control behavior via associative linking.

新皮質は、連想的な連結を通して、いかに行動を制御するかを学習しなければなりません。

●Every HTM system needs the equivalent of sensory organs.

すべてのHTMシステムは、感覚器官と等価なものを必要とします。

We call these “encoders.”

私達は、これを「エンコーダー」と呼びます。

An encoder takes some

type of data –it could be a number, time, temperature, image, or GPS location

– and turns it into a sparse

distributed representation that can be digested by the HTM learning algorithms.

エンコーダーは、あるタイプのデータをとります。それは、数、時間、温度、GPS位置情報などです。そして、それを疎分布表現に転換します。それは、HTM学習アルゴリズムによって消化可能です。

Encoders are designed for

specific data types, and often there are multiple ways an encoder can convert an input to an SDR, in the same

way that there are variations of retinas in mammals.

エンコーダーは、特定のデータ型用にデザインされています。そして、しばしば、エンコーダーが入力をSDRに変換できる方法は多重に存在します。哺乳類の網膜に、いろんな種類があるのと同様に。

The HTM learning algorithms will work with any kind of

sensory data as long as it is encoded into proper SDRs.

HTM

学習アルゴリズムは、任意の種類の感覚データで働きます。そのデータが、適切なSDRにエンコードされる限りにおいて。

●One of the exciting aspects of machine intelligence based on HTM theory is that we can create encoders for

data types that have no biological counterpart.

HTM

理論に基づいた機械知能のすばらしい側面の一つは、生物学的に対応するものがないデータ型についてもエンコーダーを造ることができることです。

For example, we have created an encoder that accepts GPS

coordinates and converts them to SDRs.

例えば、私達は、造りました|GPS座標データを受け取って、SDRに変換するエンコーダーを|。

This encoder allows an HTM system to directly sense movement

through space.

このエンコーダーは、HTMシステムが、直接に空間を通りぬける運動をセンスできることを可能にします。

The HTM system can then classify movements, make predictions of future locations, and detect

anomalies in movements.

HTM

システムは、運動を分類し、未来の位置を予測し、運動の異常を見地します。

The ability to use non-human senses offers a hint of where intelligent machines

might go.

非人間の感覚を使う能力は、知能機械がどこに進むかのヒントを与えます。

Instead of intelligent machines just being better at what humans do, they will be applied to problems

where humans are poorly equipped to sense and to act.

知能機械は、人間がすることに、より上手になるのではなく、人間が、感じたり行動するようには造られていない領域の問題に適用されるでしょう。

●To create an intelligent system, the HTM learning algorithms need both sensory encoders and some type of

behavioral framework.

知能システムを造るために、HTM学習アルゴリズムは、感覚エンコーダーと、ある種の行動枠組みの両方が必要です。

You might say that the HTM learning algorithms need a body.

あなたは、おっしゃるかもしれません|HTM学習アルゴリズムは、体が必要ですと|。

But the behaviors of the

system do not need to be anything like the behaviors of a human or robot.

しかし、システムの行動は、人間やロボットの行動のような何かを必要とはしません。

Fundamentally, behavior is a means

of moving a sensor to sample a different part of the world.

基本的に、行動は、世界の異なる部分のサンプリングをするためにセンサーを動かす一つの方法です。

For example, the behavior of an HTM system could

be traversing links on the world-wide web or exploring files on a server.

例えば、HTM システムの行動は、

●It is possible to create HTM systems without behavior.

HTM

システムを、行動無しに造ることは可能です。

If the sensory data naturally changes over time, then an

HTM system can learn the patterns in the data, classify the patterns, detect anomalies, and make predictions of

future values.

もし感覚データが、時間につれて自然に変化すると、HTM

システムは、データのパターンを学習し、パターンを分類し、異常を検出し、将来値を予測することができます。

The early work on HTM theory focuses on these kinds of problems, without a behavioral

component.

HTM

理論の初期の仕事は、この種の問題に集中し、行動の成分は持ちませんでした。

Ultimately, to realize the full potential of the HTM theory, behavior needs to be incorporated fully.

究極的に、HTM

理論の全ポテンシャルを実現するためには、行動を完全に組み込むことが必要です。

●The HTM learning algorithms are designed to work with sensor and motor data that is constantly changing.

HTM学習アルゴリズムは、常時変化しているセンサーデータと運動データで働くように設計されています。

Sensor input data may be changing naturally such as metrics from a server or the sounds of someone speaking.

センサー入力データも、サーバーからのメトリクスや、誰かがしゃべる音などのように、自然に変化しています。

Alternately the input data may be changing because the sensor itself is moving such as moving the eyes while

looking at a still picture.

交互に入力データは、変化しているかもしれません|例えば、静止画像写真を眺めながら眼を動かすように、センサー自体が動いているので|。

At the heart of HTM theory is a learning algorithm called Temporal Memory, or TM.

HTM 理論の心には、時間記憶、すなわち、TM

と呼ばれる学習アルゴリズムがあります。

As

its name implies, Temporal Memory is a memory of sequences, it is a memory of transitions in a data stream.

名前が示すように、時間記憶は、シークェンスの記憶です。それはデータの流れにおけるトランシジョンの記憶です。

TM is used in both sensory inference and motor generation.

TM

は、感覚の推測にも、運動の生成にも、使われます。

HTM theory postulates that every excitatory

neuron in the neocortex is learning transitions of patterns and that the majority of synapses on every neuron

are dedicated to learning these transitions.

HTM

理論は、仮定します|新皮質の興奮性ニューロンは、すべてパターンの推移を学習し、すべてのニューロンのシナプスの大多数はこれらの推移の学習に専念していることを|。

Temporal Memory is therefore the substrate upon which all

neocortical functions are built.

時間記憶は、それ故、その上にすべての新皮質の機能が建てられるサブストレイト(底質)です。

TM is probably the biggest difference between HTM theory and most other

artificial neural network theories.

TM は、HTM

理論と、他の殆どの人工ニューラル・ネットの理論の間での最大の違いです。

HTM starts with the assumption that everything the neocortex does is based

on memory and recall of sequences of patterns.

HTM

は、新皮質がなすすべてのことは、パターンのシークェンスの記憶と思い出しに基づくという仮定から出発します。

●HTM systems learn continuously, which is often referred to as “on-line learning”.

HTMシステムは、連続的に学習します。これは、オンライン学習と言われます。

With each change in the inputs

the memory of the HTM system is updated.

入力の変化毎に、HTMシステムの記憶は更新されます。

There are no batch learning data sets and no batch testing sets as

is the norm for most machine learning algorithms.

ありません|バッチ処理の学習データやテストデータは|多くの機械学習アルゴリズム基準である|。

Sometimes people ask, “If there are no labeled training and

test sets, how does the system know if it is working correctly and how can it correct its behavior?”

時に、人は尋ねます。「もしラベルの付いた訓練や試験セッットが無いとき、どうしてシステムは、自分が正しく働いているかどうかを知ったり、いかにしてその行動を正したりできるりですが?」と。

HTM builds a

predictive model of the world, which means that at every point in time the HTM-based system is predicting

what it expects will happen next.

HTM

は、世界の予測モデルを作ります。それは、意味します|任意の時点でHTMに依拠したモデルは、それが推定することが次に起こると予測します。

The prediction is compared to what actually happens and forms the basis of

learning.

予測は、実際に起きることと比較され、学習の基礎を形成します。

HTM systems try to minimize the error of their predictions.

HTM

システムは、その予測誤差を最小化するように努めます。

●Another advantage of continuous learning is that the system will constantly adapt if the patterns in the world

change.

もう一つの利点|連続学習の|、は、です|システムが常に適合すること|世界のパターンが変化すると|。

For a biological organism this is essential to survival.

生物学的有機体にとって、生き残ることが本質的です。

HTM theory is built on the assumption that

intelligent machines need to continuously learn as well.

HTM

理論は、知能機械も同じく連続的に学習することが必要であるという仮定のうえにたてられています。

However, there will likely be applications where we

don’t want a system to learn continuously, but these are the exceptions, not the norm.

しかし、私達は、システムが連続的に学習することを望まない応用もあるかもしれません。しかし、これらは例外であって、標準ではありません。

●The biology of the neocortex informs HTM theory.

新皮質の生物学は、HTM理論の情報を与えます。

In the following chapters we discuss details of HTM theory and continue to draw parallels between HTM and the neocortex.

以下の章で、私達は、HTM理論の詳細を議論し、HTMと新皮質と間の類似点の比較を続けます。

Like HTM theory, this book will evolve over time.

HTM理論と同じく、この本も時間とともに進化します。

At first release there are only a few chapters describing some of the HTM Principles in detail.

最初のリリースには、少しの章しかありません。

With the addition of this documentation, we hope to inspire others to understand and use HTM theory now and in the

future.

文献の追加と共に、皆さんが触発されて、HTM理論を理解し使用してくださることを期待します。

Crick, F. H.C. (1979) Thinking About the Brain. Scientific American September 1979, pp. 229, 230. Ch. 4 27

Poirazi, P. & Mel, B. W. (2001) Impact of active dendrites and structural plasticity on the memory capacity of neural tissue. Neuron, 2001 doi:10.1016/S0896-6273(01)00252-5

Rakic, P. (2009). Evolution of the neocortex: Perspective from developmental biology. Nature Reviews Neuroscience. Retrieved from http://www.ncbi.nlm.nih.gov/pmc/articles/PMC2913577/

Mountcastle, V. B. (1978) An Organizing Principle for Cerebral Function: The Unit Model and he Distributed System, in Gerald M. Edelman & Vernon V. Mountcastle, ed., 'The Mindful Brain' , MIT Press, Cambridge, MA , pp. 7-50

Zingg, B. (2014) Neural networks of the mouse neocortex. Cell, 2014 Feb 27;156(5):1096-111. doi: 10.1016/j.cell.2014.02.023

Herculano-Houzel, S. (2012). The remarkable, yet not extraordinary, human brain as a scaled-up primate brain and its associated cost. Proceedings of the National Academy of Sciences of the United States of America, 109 (Suppl 1), 10661–10668. http://doi.org/10.1073/pnas.1201895109

2016.10.9

http://numenta.com/assets/pdf/biological-and-machine-intelligence/0.4/BaMI-SDR.pdf

●In this chapter we introduce Sparse Distributed Representations (SDRs), the fundamental form of information

representation in the brain, and in HTM systems.

本章で、私達し、導入します|疎分布表現

(SDR) を|情報表現の基本形である|脳や、HTM

システムにおける|。

We talk about several interesting and useful mathematical properties of SDRs and then discuss how SDRs are used in the brain.

私達は、語ります|SDRのいくつかの興味深く数学的な特性を|、そして、議論します|いかにSDRが脳内で使われているかを|。

●One of the most interesting challenges in AI is the problem of knowledge representation.

AIにおける最も興味深い挑戦の一つは、知識表現の問題です。

Representing everyday facts and relationships in a form that computers can work with has proven to be difficult with

traditional computer science methods.

毎日の事実や関係をコンピュータが働ける形に表現することは、伝統的なコンピュータ科学の方法では困難であることが証明されました。

The basic problem is that our knowledge of the world is not divided into

discrete facts with well-defined relationships.

基本問題は、世界についての私達の知識が、よく定義された関係性をもった個々の事実に分割されていないことです。

Almost everything we know has exceptions, and the relationships